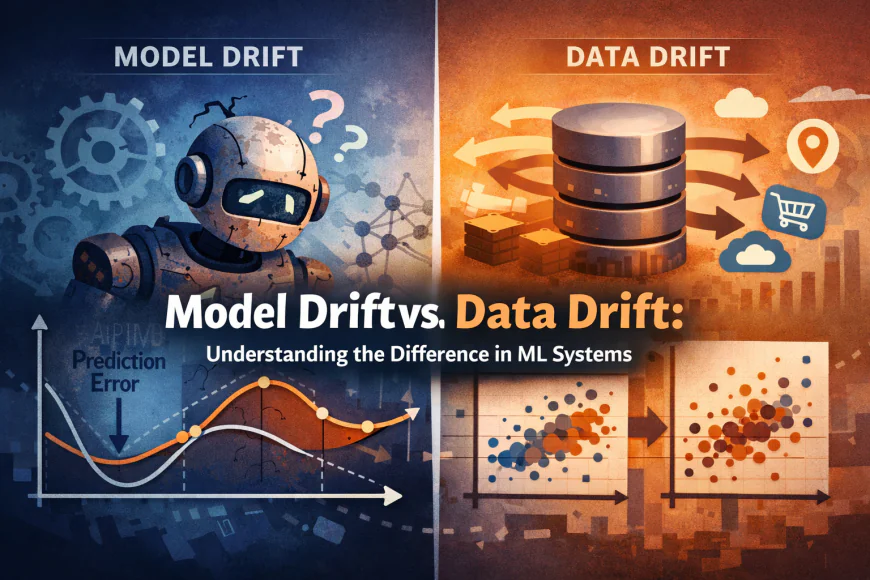

Model Drift vs. Data Drift: Understanding the Difference in ML Systems

Learn the key differences between model drift and data drift in machine learning. Understand their causes, detection methods, real-world examples, and how to prevent them.

Machine learning and artificial intelligence are evolving rapidly, and deploying a model into production is not the end of a project; it is the beginning. Once deployed, models operate in a dynamic environment where data and real-world conditions change continuously. As that environment changes, models can behave unpredictably or perform poorly. Two key reasons behind this departure from normal behavior are data drift and model drift.

Surveys show that 78% of ML deployments that experienced drift reported significant negative business impact, often resulting in measurable revenue loss or operational inefficiencies. (Source: Virtue Market Research)

These two terms are often discussed together, but they describe distinct issues that affect the performance and accuracy of a machine learning system. Understanding both is essential for designing robust production AI systems and mitigating the associated risks.

What is Data Drift?

Let's first understand what data drift is. Data drift refers to changes in the statistical properties of the input data compared with the data the model was trained on. In simple terms, the features or metrics the model sees in production begin to differ from what it saw during training.

Data drift can occur even when the underlying relationship between inputs and outputs has not changed.

For example, consider a machine learning model trained on user demographic data in which the average user age was 40. If, over time, active users become significantly older, the input feature distribution shifts. The relationship between the features and the target has not changed, yet performance can still degrade because the model now encounters inputs unlike those it was trained on.
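In probabilistic terms, the age example describes what is often called covariate shift. Writing $X$ for the input features and $Y$ for the target (notation introduced here for illustration), the input distribution moves while the input-to-target relationship stays fixed:

```latex
P_{\text{prod}}(X) \neq P_{\text{train}}(X), \qquad P_{\text{prod}}(Y \mid X) = P_{\text{train}}(Y \mid X)
```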

Key reasons why data drift happens:

• Customer behavior and preferences evolve over time

• Seasonal trends or other external events

• Changes in data collection or sensor systems

• Changes in markets or demographics

Data drift can be measured with statistical tools that compare production data against a baseline distribution, typically the training data. Common techniques for detecting drift in input features include the Population Stability Index (PSI), Kullback-Leibler (KL) divergence, and the Kolmogorov-Smirnov (KS) test.
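As a minimal sketch of the first two metrics, the following pure-Python code bins a numeric feature at the training data's deciles (a common but not mandatory choice) and compares bin proportions. PSI is the sum over bins of (actual − expected) × ln(actual / expected); algebraically this equals KL(actual ‖ expected) + KL(expected ‖ actual), a symmetrized KL divergence. The function names, the bin count, and the `eps` floor for empty bins are assumptions for illustration, and the simulated ages mirror the demographic example above.

```python
import bisect
import math
import random
from typing import List, Sequence


def quantile_edges(baseline: Sequence[float], n_bins: int = 10) -> List[float]:
    """Inner bin edges at the baseline's quantiles (deciles by default)."""
    s = sorted(baseline)
    return [s[len(s) * i // n_bins] for i in range(1, n_bins)]


def bin_proportions(values: Sequence[float], edges: Sequence[float],
                    eps: float = 1e-6) -> List[float]:
    """Share of values in each of the len(edges) + 1 bins.

    Empty bins are floored at eps (an assumption) so the logarithms stay finite.
    """
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for two binned distributions over the same bins."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


def psi(expected: Sequence[float], actual: Sequence[float]) -> float:
    """Population Stability Index: sum of (actual - expected) * ln(actual / expected)."""
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


if __name__ == "__main__":
    rng = random.Random(0)
    train_age = [rng.gauss(40, 10) for _ in range(5000)]  # training users, mean age ~40
    prod_age = [rng.gauss(50, 10) for _ in range(5000)]   # hypothetical older production users
    edges = quantile_edges(train_age)
    expected = bin_proportions(train_age, edges)
    actual = bin_proportions(prod_age, edges)
    print(f"PSI = {psi(expected, actual):.3f}")
    print(f"KL(actual || expected) = {kl_divergence(actual, expected):.3f}")
```

A commonly quoted rule of thumb reads PSI below 0.1 as stable and above 0.25 as a significant shift, though teams tune these thresholds to their own data.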

What is Model Drift?

Data drift is concerned with inputs, whereas model drift (often used interchangeably with concept drift) is about a change in the relationship between the input features and the target outcome, i.e., the thing the model is trying to predict.

Even if the input data keep roughly the same distribution, the patterns the model learned during training may no longer hold true.
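Using $X$ for the input features and $Y$ for the target (notation introduced here for illustration), it helps to factor the joint distribution as $P(X, Y) = P(Y \mid X)\,P(X)$. Data drift changes the factor $P(X)$; concept drift changes the factor the model actually approximates, $P(Y \mid X)$, possibly while $P(X)$ stays put:

```latex
P_{\text{prod}}(Y \mid X) \neq P_{\text{train}}(Y \mid X), \qquad \text{even if } P_{\text{prod}}(X) \approx P_{\text{train}}(X)
```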

Consider a credit scoring model designed to predict default risk. If a recession hits and economic conditions suddenly worsen, the patterns that previously linked features such as income and credit history to default behavior may no longer hold.

The model’s learned logic starts to break down and its predictions degrade, even though the feature distribution has not changed drastically.
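To make the credit example concrete, here is a toy simulation. Everything in it is hypothetical: a single income feature (in thousands), a fixed threshold "model" standing in for one trained before the recession, and a post-recession world in which the income level below which applicants default rises from 30 to 45. The income distribution is identical in both periods, so input-level drift checks would see nothing, yet accuracy falls.

```python
import random
from typing import Callable, List, Tuple


def make_batch(n: int, default_threshold: float,
               rng: random.Random) -> List[Tuple[float, int]]:
    """Hypothetical applicants as (income in thousands, defaulted 1/0).

    Income is always drawn from the same distribution; only the rule that
    maps income to default (the concept) depends on default_threshold.
    """
    batch = []
    for _ in range(n):
        income = rng.uniform(10, 100)
        batch.append((income, 1 if income < default_threshold else 0))
    return batch


def trained_model(income: float) -> int:
    # Stand-in for a model fitted before the recession: it learned that
    # applicants earning under 30k tend to default.
    return 1 if income < 30 else 0


def accuracy(model: Callable[[float], int], batch: List[Tuple[float, int]]) -> float:
    return sum(model(x) == y for x, y in batch) / len(batch)


def mean_income(batch: List[Tuple[float, int]]) -> float:
    return sum(x for x, _ in batch) / len(batch)


rng = random.Random(42)
before = make_batch(10_000, default_threshold=30, rng=rng)  # pre-recession concept
after = make_batch(10_000, default_threshold=45, rng=rng)   # post-recession concept

print(f"mean income before/after: {mean_income(before):.1f} / {mean_income(after):.1f}")
print(f"accuracy before: {accuracy(trained_model, before):.2f}")
print(f"accuracy after:  {accuracy(trained_model, after):.2f}")
```

By construction, accuracy before the recession is exactly 1.00. Afterwards every applicant earning between 30k and 45k is misclassified, so accuracy should land near 0.83 (roughly 75/90 of the income range), while mean income barely moves.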

In a broader sense, model drift is used as an umbrella term covering several subtypes of drift, such as:

• Concept drift: the statistical relationship between the features and the label changes

• Label drift: the distribution of the target variable itself changes

• Model parameter drift: unintended changes in the model's parameters alter its performance
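Label drift can be checked directly once ground-truth labels arrive. For a binary target such as loan default, one simple option (swapped in here for illustration; a chi-squared test generalizes it to more classes) is a two-proportion z-test on the positive-label rate. The function name and the counts in the usage example are hypothetical.

```python
import math
from typing import Tuple


def label_drift_ztest(train_pos: int, train_n: int,
                      recent_pos: int, recent_n: int) -> Tuple[float, float]:
    """Two-proportion z-test: has the positive-label rate moved since training?

    Returns (z statistic, two-sided p-value).
    """
    p_train = train_pos / train_n
    p_recent = recent_pos / recent_n
    pooled = (train_pos + recent_pos) / (train_n + recent_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / train_n + 1 / recent_n))
    if se == 0:
        return 0.0, 1.0  # all labels identical in both samples: no evidence of drift
    z = (p_recent - p_train) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 5% defaults in training data, 8% among recently labeled loans.
    z, p = label_drift_ztest(train_pos=500, train_n=10_000, recent_pos=240, recent_n=3_000)
    print(f"z = {z:.2f}, p = {p:.2g}")
```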

Model Drift vs. Data Drift: Key Differences

| Aspect | Data Drift | Model Drift |
|---|---|---|
| Focus | Change in input data distribution | Change in relationship between inputs and outputs |
| Cause | External shifts in data patterns | Changes in real-world conditions or target dynamics |
| Detection | Statistical tests on features | Performance monitoring over time |
| Example | Shift in user age demographics | Changed customer behavior affecting outcomes |
| Response | Update training data or preprocessing | Retrain the model with new ground truth |

Data drift changes what the model sees, whereas model drift changes what the model needs to learn. The two are linked but not interchangeable: data drift can lead to model drift, and a model's performance can also deteriorate from concept-level changes without any shift in the raw input distributions.

How to Detect and Respond to Drift

Detecting Data Drift

Data professionals and machine learning engineers can detect data drift by analyzing the statistical properties of input data. This includes:

- Comparing feature distributions in real time with training data

- Tracking schema changes

- Using divergence metrics like PSI or KL divergence
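One way to sketch the first two checks in plain Python, assuming features arrive as lists of numbers and records as dictionaries (both assumptions for illustration): `ks_statistic` computes the two-sample Kolmogorov-Smirnov statistic, and `schema_changes` is a hypothetical helper that compares an incoming record against an expected column-to-type mapping.

```python
import bisect
from typing import Dict, List, Sequence


def ks_statistic(baseline: Sequence[float], current: Sequence[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: the largest vertical gap between
    the empirical CDFs (0 = identical samples, 1 = non-overlapping ranges)."""
    a, b = sorted(baseline), sorted(current)
    gap = 0.0
    # Empirical CDFs only jump at observed values, so checking those points suffices.
    for x in a + b:
        cdf_a = bisect.bisect_right(a, x) / len(a)
        cdf_b = bisect.bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


def schema_changes(expected: Dict[str, type], row: Dict[str, object]) -> List[str]:
    """List differences between an expected column -> type mapping and one record."""
    issues = []
    for col, typ in expected.items():
        if col not in row:
            issues.append(f"missing column: {col}")
        elif not isinstance(row[col], typ):
            issues.append(f"type change in {col}: expected {typ.__name__}, "
                          f"got {type(row[col]).__name__}")
    for col in sorted(row.keys() - expected.keys()):
        issues.append(f"unexpected column: {col}")
    return issues


if __name__ == "__main__":
    expected_schema = {"age": int, "income": float}  # hypothetical training schema
    print(schema_changes(expected_schema, {"age": 41, "income": "52000", "region": "EU"}))
    print(ks_statistic([31, 38, 40, 44, 52], [45, 51, 58, 60, 63]))
```

In practice, `scipy.stats.ks_2samp` returns the same statistic together with a p-value, which is usually more convenient than hand-rolling it.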

Detecting Model Drift

Model drift is usually detected by monitoring performance, which requires ground-truth outcomes for recent predictions. To do this:

- Track model metrics such as accuracy, precision, recall, etc., on recent data

- Compare predictions with verified outcomes

- Use sliding time windows to separate sustained performance drops from short-lived fluctuations
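The three steps above can be sketched as a small monitor that compares each prediction with its verified outcome and tracks accuracy over the most recent window. The class name, window size, baseline, and tolerance are hypothetical choices, not a standard API; real systems often track several metrics and use time-based rather than count-based windows.

```python
from collections import deque


class SlidingWindowMonitor:
    """Accuracy over the most recent `window` labeled predictions.

    Flags drift only once the window is full and its accuracy has fallen more
    than `tolerance` below the accuracy measured at deployment (`baseline`).
    One bad prediction moves a full window by only 1/window, so brief noise
    is damped while a sustained drop still trips the alert.
    """

    def __init__(self, baseline: float, window: int = 500, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # 1 = correct, 0 = wrong

    def accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else float("nan")

    def update(self, prediction, actual) -> bool:
        """Record one prediction once its verified outcome arrives; True means drift is flagged."""
        self.outcomes.append(1 if prediction == actual else 0)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough recent evidence yet
        return self.accuracy() < self.baseline - self.tolerance
```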

Responding to Drift

Once you detect the drift, here are a few ways to address it:

- Retraining the model with recent and contextual data

- Revisiting feature engineering or the model architecture

- Automating retraining

With proper monitoring and automated pipelines, you can keep models relevant to real-world conditions.
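An automated retraining pipeline needs a rule for when to fire. The sketch below is one hypothetical policy combining both kinds of signal: a data-drift signal (the largest per-feature PSI) and a model-drift signal (the drop in accuracy on recently labeled data). The class name, field names, and default thresholds are assumptions; 0.25 reflects a commonly quoted PSI rule of thumb, and suitable values depend on the application.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class DriftSignals:
    max_feature_psi: float    # largest PSI across monitored input features
    recent_accuracy: float    # accuracy on recently labeled production data
    baseline_accuracy: float  # accuracy measured when the model was deployed


def should_retrain(signals: DriftSignals, psi_threshold: float = 0.25,
                   max_accuracy_drop: float = 0.05) -> Tuple[bool, List[str]]:
    """Hypothetical trigger policy: retrain if either drift signal crosses its threshold."""
    reasons = []
    if signals.max_feature_psi > psi_threshold:
        reasons.append("input distribution shift (data drift)")
    if signals.baseline_accuracy - signals.recent_accuracy > max_accuracy_drop:
        reasons.append("performance drop (possible model drift)")
    return bool(reasons), reasons


if __name__ == "__main__":
    print(should_retrain(DriftSignals(max_feature_psi=0.31, recent_accuracy=0.90,
                                      baseline_accuracy=0.92)))
```

Returning the reasons alongside the decision lets the pipeline log why a retrain was triggered, which helps distinguish data drift from model drift when choosing the response.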

Conclusion

Model drift and data drift are among the most common challenges in maintaining machine learning systems in production. Data drift concerns changes in input distributions, whereas model drift stems from changes in the relationship between features and outcomes that the model learned. Either can degrade a model's performance.

Data drift and model drift require different techniques to detect and mitigate them. Through continuous monitoring and sound drift management strategies, however, data professionals can keep AI models accurate and reliable.